31. Discrete event simulation

Running simulations in realtime gives us an experience which is very similar to working with a real Unet, as we have seen in earlier chapters. This is very useful when you want to interact with the network manually, through a shell. However, as a network designer or protocol developer, you may sometimes need to run simulations to see how the network performs over days or months, and maybe run many such simulations, each with slightly different network settings or configuration. Doing this with a realtime simulator is impractical, as a realtime simulation would take days or months to run. The Unet simulator can be run in a discrete event mode, where the waiting time between events is fast-forwarded to yield results worth hours or days of real time within minutes. In this chapter, we explore how to use the discrete event mode for simulation of protocol performance.

31.1. ALOHA performance analysis

The hello world of the networking world is the ALOHA MAC protocol. The protocol is very simple: transmit a frame as soon as data arrives, without worrying about whether any other node is transmitting. While this behavior is straightforward to describe, simulating it accurately requires some thought.

Let’s say we want to simulate a network with ALOHA MAC. On each node, we expect data to arrive randomly, with a known average arrival rate. The total number of data "chunks" arriving per unit time across the network is termed the offered load. As soon as a data chunk arrives, the node transmits it to a randomly chosen destination node (other than itself). The number of successfully delivered data chunks per unit time, across the entire network, is called the throughput. We are interested in studying how the throughput varies as a function of offered load.

ALOHA has been extensively studied in literature, and its theoretical performance is well known. In order to simulate a network that can be compared against theory, we need to ensure that our simulation matches the assumptions made in the theoretical derivations:

1. The random arrival process follows a Poisson distribution.

2. If two frames arrive at a receiver with some overlap in time, they collide and are lost. Neither frame can be successfully decoded.

3. Each node is half-duplex, i.e., it cannot receive a frame while it is transmitting.

4. No frames are lost due to noise or channel effects such as multipath.

5. There is no propagation delay between nodes.

Assumptions 2 and 4 together form a model called the protocol channel model. We tell the simulator to adopt this model:

channel.model = ProtocolChannelModel

Underwater acoustic modems are usually half-duplex, and the default modem model in the simulator is the `HalfDuplexModem`, so we shouldn’t need to do anything special for assumption 3. However, the `HalfDuplexModem` is smart enough to delay a transmission if another frame is being transmitted or received by the node, to avoid losing the other frame. While this is usually a good thing to do, it will violate assumption 3 and give us results that don’t agree with theory. To match the theoretical behavior, we have to stop any ongoing transmission or reception when new data arrives for transmission:

phy << new ClearReq() // stop ongoing transmission/reception

phy << new TxFrameReq(to: dst, type: DATA) // transmit a data frame to dst

The offered load and throughput are usually normalized by the number of frames that can be supported by the channel per unit time. By setting the frame duration to be one second, we ensure that the normalization factor is 1 (one frame can be transmitted per second without collision). To do this, we set up the simulated modem to have no header/preamble overheads, and exactly 1 second worth of data that it can carry in a frame:

modem.dataRate = [2400, 2400].bps // arbitrary data rate

modem.frameLength = [2400/8, 2400/8].bytes // 1 second worth of data per frame

modem.headerLength = 0 // no overhead from header

modem.preambleDuration = 0 // no overhead from preamble

modem.txDelay = 0 // don't simulate hardware delays

| You can read more about `modem` models in Section 32.1. |

A Poisson process (assumption 1) is easily simulated using the `PoissonBehavior` available in fjåge. Assumption 5 can also be easily met by placing all nodes at the same location.
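To make the Poisson assumption concrete, here is a minimal standalone sketch of what such an arrival process looks like: inter-arrival times are drawn from an exponential distribution whose mean is the reciprocal of the arrival rate. This is only an illustration of the statistical model, not the implementation of `PoissonBehavior`; the variable names, the seed, and the sample count are assumptions made for this example.

```groovy
// Illustration only: exponential inter-arrival times for a Poisson process.
// This does not reproduce how fjåge's PoissonBehavior works internally.
def load = 0.2                        // offered load across the network (frames per second)
def nodeCount = 4                     // number of nodes sharing the load
def arrivalRate = load / nodeCount    // arrivals per second on each node
def meanIntervalMs = (long)(1000 / arrivalRate)
println "mean interval: ${meanIntervalMs} ms"   // prints "mean interval: 20000 ms"

// Draw inter-arrival times by inverting the exponential CDF
def rng = new Random(42)              // fixed seed (arbitrary) for repeatability
def samples = (1..100000).collect { -Math.log(1.0d - rng.nextDouble()) * meanIntervalMs }
def sampleMean = samples.sum() / samples.size()
println "sample mean: ${sampleMean.round()} ms" // close to the mean interval
```

The mean interval computed here is the same quantity that is passed to `PoissonBehavior` (in milliseconds) in the script that follows.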

Now let’s put a first version of our script together to simulate a 4-node ALOHA network:

import org.arl.fjage.*

import org.arl.unet.*

import org.arl.unet.phy.*

import org.arl.unet.sim.*

import org.arl.unet.sim.channels.*

channel.model = ProtocolChannelModel // use the protocol channel model

modem.dataRate = [2400, 2400].bps // arbitrary data rate

modem.frameLength = [2400/8, 2400/8].bytes // 1 second worth of data per frame

modem.headerLength = 0 // no overhead from header

modem.preambleDuration = 0 // no overhead from preamble

modem.txDelay = 0 // don't simulate hardware delays

def nodes = 1..4 // list with 4 nodes

def load = 0.2 // offered load to simulate

simulate 2.hours, { // simulate 2 hours of elapsed time

nodes.each { myAddr ->

def myNode = node "${myAddr}", address: myAddr, location: [0, 0, 0]

myNode.startup = { // startup script to run on each node

def phy = agentForService(Services.PHYSICAL)

def arrivalRate = load/nodes.size() // arrival rate per node

add new PoissonBehavior((long)(1000/arrivalRate), { // avg time between events in ms

def dst = rnditem(nodes-myAddr) // choose destination randomly (excluding self)

phy << new ClearReq()

phy << new TxFrameReq(to: dst, type: Physical.DATA)

})

}

}

}

// display collected statistics

println([trace.txCount, trace.rxCount, trace.offeredLoad, trace.throughput])

The script is easy to understand. In a 2-hour long simulation, we iterate over the list of nodes, and create each node at the origin. Each node adds a `PoissonBehavior` to generate random traffic at a rate corresponding to the offered `load` setting. The parameter of the Poisson behavior is the average time between events in milliseconds, which we compute based on the arrival rate. The destination for each transmission is randomly chosen from the list of nodes excluding the transmitting node. Once the simulation is completed, statistics are printed. The `trace` object is automatically defined by the simulator to collect typically required statistics.

| The `rnditem(list)` function allows a random item to be chosen from a list. Other convenience functions related to random number generation include `rnd(min, max)`, which generates a uniformly distributed random number between `min` and `max`, and `rndint(n)`, which generates a uniformly distributed random integer between `0` and `n-1`. |

Open Unet IDE (`bin/unet sim`), create a new simulation script in the `scripts` folder, copy this code in, and run it. Within a few seconds, you should see the results:

[1459, 984, 0.2026, 0.1367]

1 simulation completed in 2.817 seconds

Since this is a Monte-Carlo simulation driven by a random number generator, the statistics you see will be similar, but not identical. In this run, a total of 1459 frames were transmitted, and 984 of them were successfully received. The measured offered load was 0.2026, and the throughput was 0.1367.

Hang on a minute! The simulation was meant to run for 2 hours, but it finished in less than 3 seconds!!

That’s because we ran the simulation in discrete event simulation mode (it is the default mode, if we don’t set `platform = RealTimePlatform`). We could have explicitly set it (`platform = DiscreteEventSimulator`), if we wanted. Now that we can run hours worth of simulations in seconds, we can go ahead and measure ALOHA throughput at various load settings:

import org.arl.fjage.*

import org.arl.unet.*

import org.arl.unet.phy.*

import org.arl.unet.sim.*

import org.arl.unet.sim.channels.*

println '''

Pure ALOHA simulation

=====================

TX Count\tRX Count\tOffered Load\tThroughput

--------\t--------\t------------\t----------'''

channel.model = ProtocolChannelModel // use the protocol channel model

modem.dataRate = [2400, 2400].bps // arbitrary data rate

modem.frameLength = [2400/8, 2400/8].bytes // 1 second worth of data per frame

modem.headerLength = 0 // no overhead from header

modem.preambleDuration = 0 // no overhead from preamble

modem.txDelay = 0 // don't simulate hardware delays

def nodes = 1..4 // list with 4 nodes

trace.warmup = 15.minutes // collect statistics after a while

for (def load = 0.1; load <= 1.5; load += 0.1) {

simulate 2.hours, { // simulate 2 hours of elapsed time

nodes.each { myAddr ->

def myNode = node "${myAddr}", address: myAddr, location: [0, 0, 0]

myNode.startup = { // startup script to run on each node

def phy = agentForService(Services.PHYSICAL)

def arrivalRate = load/nodes.size() // arrival rate per node

add new PoissonBehavior((long)(1000/arrivalRate), { // avg time between events in ms

def dst = rnditem(nodes-myAddr) // choose destination randomly (excluding self)

phy << new ClearReq()

phy << new TxFrameReq(to: dst, type: Physical.DATA)

})

}

}

} // simulate

// tabulate collected statistics

println sprintf('%6d\t\t%6d\t\t%7.3f\t\t%7.3f',

[trace.txCount, trace.rxCount, trace.offeredLoad, trace.throughput])

} // for

Other than the pretty printing to tabulate the output, you’ll see that we have added a `trace.warmup` time. This is to ensure that we only collect statistics after the simulation has reached steady state (in this case, after 15 minutes of simulation time).

A slightly beautified copy of the above code is available in the `samples/aloha.groovy` script. You can either run that, or run the above code. You should see something like this output:

Pure ALOHA simulation

=====================

TX Count RX Count Offered Load Throughput

-------- -------- ------------ ----------

614 525 0.068 0.058

1228 962 0.137 0.107

1871 1249 0.209 0.139

2480 1407 0.277 0.156

3093 1535 0.347 0.171

3759 1616 0.421 0.180

4273 1665 0.479 0.183

4971 1599 0.558 0.178

5540 1605 0.622 0.178

6256 1532 0.702 0.170

6940 1375 0.783 0.153

7338 1407 0.826 0.156

7992 1338 0.904 0.149

8598 1282 0.972 0.142

9394 1048 1.062 0.116

15 simulations completed in 102.494 seconds

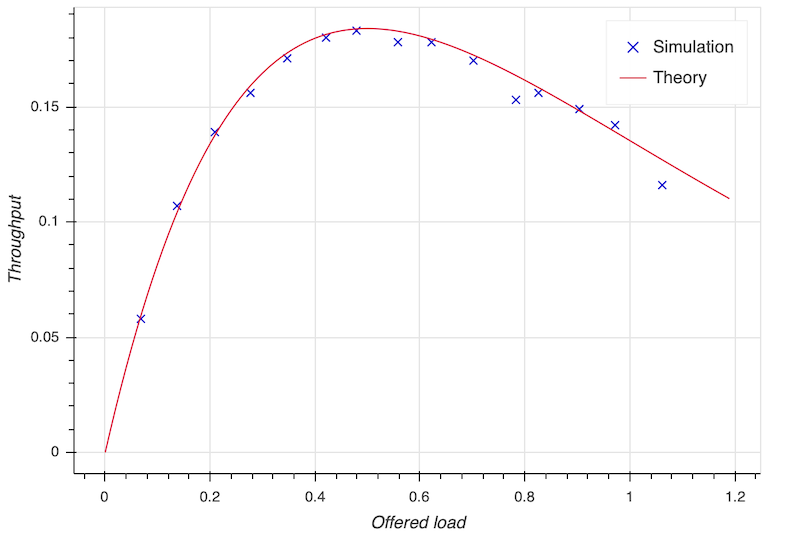

As expected from the ALOHA protocol, the maximum throughput of about 0.18 is reached at an offered load of about 0.5. We plot this against the theoretical ALOHA performance curve (`y = x exp(-2x)`) in Figure 16.
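The comparison with theory can also be checked numerically. The sketch below evaluates the theoretical curve `y = x exp(-2x)` at a few of the measured offered loads; the `measured` pairs are copied from the simulation output above, and the closure name `alohaThroughput` is simply a name chosen for this example.

```groovy
// Theoretical pure ALOHA throughput for a normalized offered load x
def alohaThroughput = { double x -> x * Math.exp(-2 * x) }

// (offered load, throughput) pairs copied from the simulation output above
def measured = [[0.068, 0.058], [0.209, 0.139], [0.479, 0.183], [0.702, 0.170], [1.062, 0.116]]

println 'Load\tSim\tTheory'
measured.each { load, throughput ->
  def theory = alohaThroughput(load as double)
  println String.format('%.3f\t%.3f\t%.3f', load as double, throughput as double, theory)
}

// The curve peaks at x = 0.5, where the throughput is 1/(2e), roughly 0.184
def peak = alohaThroughput(0.5d)
println String.format('Peak throughput: %.3f', peak)
```

The theoretical peak of roughly 0.184 at an offered load of 0.5 agrees with the maximum of about 0.18 seen in the simulation.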

31.2. Logs, traces and statistics

When a simulation is run, usually two files are produced.

31.2.1. Log file

The `logs/log-0.txt` file contains detailed text logs from the Java logging framework. Your agents and simulation scripts may log additional information to this file using `log.info()` or `log.fine()` methods. This provides a flexible and customizable way to log events in your simulation for later analysis.

A typical extract of the log file is shown below:

1569242004546|INFO|org.arl.unet.nodeinfo.NodeInfo@558:setAddress|Node address changed to 1

1569242004548|INFO|Script1@558:invoke|Created static node 1 (1) @ [0, 0, 0]

1569242004552|INFO|org.arl.unet.nodeinfo.NodeInfo@558:setAddress|Node address changed to 2

1569242004553|INFO|Script1@558:invoke|Created static node 2 (2) @ [0, 0, 0]

1569242004553|INFO|org.arl.unet.nodeinfo.NodeInfo@558:setAddress|Node address changed to 3

1569242004554|INFO|Script1@558:invoke|Created static node 3 (3) @ [0, 0, 0]

1569242004554|INFO|org.arl.unet.nodeinfo.NodeInfo@558:setAddress|Node address changed to 4

1569242004554|INFO|Script1@558:invoke|Created static node 4 (4) @ [0, 0, 0]

1569242004555|INFO|Script1@558:invoke| --- BEGIN SIMULATION #1 ---

0|INFO|org.arl.unet.sim.SimulationContainer@558:init|Initializing agents...

0|INFO|org.arl.unet.sim.SimulationAgent/1@561:invoke|Loading simulator : SimulationAgent

0|INFO|org.arl.unet.nodeinfo.NodeInfo/1@560:init|Loading agent node v3.0

0|INFO|org.arl.unet.sim.HalfDuplexModem/1@559:init|Loading agent phy v3.0

:

:

5673|INFO|org.arl.unet.sim.SimulationAgent/4@570:call|TxFrameNtf:INFORM[type:DATA txTime:2066947222]

6511|INFO|org.arl.unet.sim.SimulationAgent/3@567:call|TxFrameNtf:INFORM[type:DATA txTime:1157370743]

10919|INFO|org.arl.unet.sim.SimulationAgent/4@570:call|TxFrameNtf:INFORM[type:DATA txTime:2072193222]

Note that the timestamp (first column) changes from the clock time to discrete event time when the simulation starts, and switches back to clock time when the simulation ends.
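Because each log entry is a single line with `|`-separated fields, the log file is easy to post-process. The following is a minimal sketch, assuming the four-field layout seen in the extract above (timestamp, level, source, message); the field names used in the map are labels chosen for this example, not names defined by the logging framework.

```groovy
// A few lines copied from the log extract above
def extract = '''
1569242004555|INFO|Script1@558:invoke| --- BEGIN SIMULATION #1 ---
0|INFO|org.arl.unet.sim.SimulationContainer@558:init|Initializing agents...
5673|INFO|org.arl.unet.sim.SimulationAgent/4@570:call|TxFrameNtf:INFORM[type:DATA txTime:2066947222]
6511|INFO|org.arl.unet.sim.SimulationAgent/3@567:call|TxFrameNtf:INFORM[type:DATA txTime:1157370743]
'''.trim().readLines()

// Split each line into at most 4 fields, so that any '|' in the message is preserved
def records = extract.collect { line ->
  def f = line.split(/\|/, 4)
  [time: f[0].toLong(), level: f[1], source: f[2], message: f[3]]
}

// Timestamps (in discrete event time) at which frames were transmitted
def txTimes = records.findAll { it.message.startsWith('TxFrameNtf') }.collect { it.time }
println txTimes    // prints [5673, 6511]
```

To process a real log, the same code could read the lines with `new File('logs/log-0.txt').readLines()` instead of using the inline extract.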

31.3. Trace files

31.4. JSON trace file

Since UnetStack 3.3.0, the default trace file is stored in a rich JSON format.

When running a simulation, a JSON trace file `logs/trace.json` is automatically generated. This file contains a detailed trace for every event in the network stack, on each node. You can even enable trace file generation on real modems and other Unet nodes (using `EventTracer.enable()`), and later combine the traces from multiple nodes to analyze network protocol operation and performance.

A small extract from a typical trace file is shown below:

{"version": "1.0","group":"EventTrace","events":[

{"group":"SIMULATION 1","events":[

{"time":1617877446718,"component":"arp::org.arl.unet.addr.AddressResolution/B","threadID":"0bfb305d-4920-4df0-af95-5282b048b5ec","stimulus":{"clazz":"org.arl.unet.addr.AddressAllocReq","messageID":"0bfb305d-4920-4df0-af95-5282b048b5ec","performative":"REQUEST","sender":"node","recipient":"arp"},"response":{"clazz":"org.arl.unet.addr.AddressAllocRsp","messageID":"3e421e28-89ca-44ec-bc65-16cc404d3703","performative":"INFORM","recipient":"node"}},

{"time":1617877446718,"component":"arp::org.arl.unet.addr.AddressResolution/A","threadID":"04f5b1b9-9178-4e27-aae7-e2a0c4ffcd89","stimulus":{"clazz":"org.arl.unet.addr.AddressAllocReq","messageID":"04f5b1b9-9178-4e27-aae7-e2a0c4ffcd89","performative":"REQUEST","sender":"node","recipient":"arp"},"response":{"clazz":"org.arl.unet.addr.AddressAllocRsp","messageID":"e0fe806d-625d-4261-b24c-6655b90cc06a","performative":"INFORM","recipient":"node"}},

:

:

]}

]}

The trace is organized into a hierarchy of groups, each describing a simulation run or the execution of specific commands. A group consists of a sequence of events, with each event providing information on time of event, component (agent running on a node), thread ID, stimulus and response. The stimulus is typically a message received from another agent, and the response a message sent to another agent. The thread ID ties together multiple events that share the same root cause, potentially across multiple agents and nodes.
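Since the trace is plain JSON, it can be explored with any JSON parser. The sketch below walks the group/event hierarchy using Groovy's `JsonSlurper` on an abbreviated sample shaped like the extract above (the `messageID` fields are omitted for brevity). Treating the text after the last `/` in `component` as the node name is an assumption based on the extract, not a documented rule.

```groovy
import groovy.json.JsonSlurper

// Abbreviated sample with the same structure as the trace extract above.
// For a real trace, one could use: new JsonSlurper().parse(new File('logs/trace.json'))
def json = '''
{"version":"1.0","group":"EventTrace","events":[
  {"group":"SIMULATION 1","events":[
    {"time":1617877446718,"component":"arp::org.arl.unet.addr.AddressResolution/B",
     "threadID":"0bfb305d-4920-4df0-af95-5282b048b5ec",
     "stimulus":{"clazz":"org.arl.unet.addr.AddressAllocReq","performative":"REQUEST","sender":"node","recipient":"arp"},
     "response":{"clazz":"org.arl.unet.addr.AddressAllocRsp","performative":"INFORM","recipient":"node"}},
    {"time":1617877446718,"component":"arp::org.arl.unet.addr.AddressResolution/A",
     "threadID":"04f5b1b9-9178-4e27-aae7-e2a0c4ffcd89",
     "stimulus":{"clazz":"org.arl.unet.addr.AddressAllocReq","performative":"REQUEST","sender":"node","recipient":"arp"},
     "response":{"clazz":"org.arl.unet.addr.AddressAllocRsp","performative":"INFORM","recipient":"node"}}
  ]}
]}
'''

def doc = new JsonSlurper().parseText(json)
def shortName = { String clazz -> clazz?.tokenize('.')?.last() }  // strip the package name

def summary = []
doc.events.each { group ->            // top-level groups, e.g. one per simulation run
  group.events.each { ev ->           // individual events within the group
    def nodeName = ev.component.tokenize('/').last()   // assumption: suffix is the node name
    summary << "${group.group}: node ${nodeName}: ${shortName(ev.stimulus?.clazz)} -> ${shortName(ev.response?.clazz)}".toString()
  }
}
summary.each { println it }

// Events sharing a threadID have the same root cause
def byThread = doc.events.collectMany { it.events }.groupBy { it.threadID }
println "${byThread.size()} distinct threads"
```

Grouping by `threadID`, as in the last two lines, is one simple way to follow a single root cause across agents and nodes.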

An experimental automated trace analysis tool can be used to produce sequence diagrams from JSON trace files.

Integrating the event tracing framework into your own agents is simple. All you need to do is to wrap messages that you generate in response to a stimulus with a `trace()` call. Some examples:

send trace(stimulus, new DatagramDeliveryNtf(stimulus))

request trace(stimulus, req), timeout

31.5. Legacy trace file

The legacy trace file format is similar to the NS2 NAM trace. Since UnetStack 3.3.0, this format is no longer the default, but can be enabled easily in your simulation script if you need it:

trace.open(new File(home, 'logs/trace.nam'))

The trace file contains information about all packet creation, transmission, reception and drop events. It also contains details of node motion. The tracer also computes basic statistics including queued packet count, transmitted packet count, received packet count, dropped packet count, offered load, actual load, average packet latency and normalized throughput. An extract from the trace file is shown below:

# BEGIN SIMULATION 1

n -t 8.005000 -s 3 -x 0.000000 -y 0.000000 -Z 0.000000 -a 3

+ -t 8.005000 -s 3 -d 2 -i 40839989 -p 0 -x {3.0 2.0 -1 ------- null}

- -t 8.005000 -s 3 -d 2 -i 40839989 -p 0 -x {3.0 2.0 -1 ------- null}

n -t 8.005000 -s 1 -x 0.000000 -y 0.000000 -Z 0.000000 -a 1

n -t 8.005000 -s 2 -x 0.000000 -y 0.000000 -Z 0.000000 -a 2

n -t 8.005000 -s 4 -x 0.000000 -y 0.000000 -Z 0.000000 -a 4

r -t 9.005000 -s 3 -d 2 -i 40839989 -p 0 -x {3.0 2.0 -1 ------- null}

r -t 9.005000 -s 3 -d 1 -i 40839989 -p 0 -x {3.0 2.0 -1 ------- null}

r -t 9.005000 -s 3 -d 4 -i 40839989 -p 0 -x {3.0 2.0 -1 ------- null}

+ -t 42.042000 -s 1 -d 2 -i 254433913 -p 0 -x {1.0 2.0 -1 ------- null}

- -t 42.042000 -s 1 -d 2 -i 254433913 -p 0 -x {1.0 2.0 -1 ------- null}

r -t 43.042000 -s 1 -d 2 -i 254433913 -p 0 -x {1.0 2.0 -1 ------- null}

r -t 43.042000 -s 1 -d 4 -i 254433913 -p 0 -x {1.0 2.0 -1 ------- null}

r -t 43.042000 -s 1 -d 3 -i 254433913 -p 0 -x {1.0 2.0 -1 ------- null}

:

:

d -t 584.925000 -s 1 -d 4 -i 259068939 -p 0 -x {1.0 4.0 -1 ------- null} -y CLEAR

+ -t 584.925000 -s 4 -d 1 -i -2069119004 -p 0 -x {4.0 1.0 -1 ------- null}

- -t 584.925000 -s 4 -d 1 -i -2069119004 -p 0 -x {4.0 1.0 -1 ------- null}

d -t 584.925000 -s 4 -d 1 -i -2069119004 -p 0 -x {4.0 1.0 -1 ------- null} -y COLLISION

d -t 584.925000 -s 4 -d 2 -i -2069119004 -p 0 -x {4.0 1.0 -1 ------- null} -y COLLISION

d -t 584.925000 -s 4 -d 3 -i -2069119004 -p 0 -x {4.0 1.0 -1 ------- null} -y COLLISION

d -t 585.747000 -s 1 -d 2 -i 259068939 -p 0 -x {1.0 4.0 -1 ------- null} -y BAD_FRAME

d -t 585.747000 -s 1 -d 3 -i 259068939 -p 0 -x {1.0 4.0 -1 ------- null} -y BAD_FRAME

:

:

# STATS: q=621, t=621, r=506, d=115, O=0.099, L=0.099, D=0.000, T=0.080

# END SIMULATION 1

Lines starting with `n` log node locations/motion. Lines starting with `+` denote packet arrival into the transmit queue. Lines starting with `-` log packet removal from the transmit queue, i.e., transmission. Lines starting with `r` denote packet reception (or overhearing). Lines starting with `d` log packet drops, and specify a reason for the drop. `CLEAR` indicates a packet transmission/reception abort due to a `ClearReq` request. `COLLISION` indicates that the packet was dropped because the node was busy receiving or transmitting another packet. `BAD_FRAME` indicates that the packet was corrupted (possibly due to interference from a colliding packet).

For more details on the trace file format, see the NS2 NAM trace format.
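The line-oriented format also lends itself to quick scripted analysis. As a minimal sketch, the code below tallies drop reasons and reads the `# STATS` line from a few lines copied from the extract above. The regular expression assumes that the reason follows `-y` at the end of each `d` line, as it does in the extract, and the interpretation of the statistics keys follows the order in which the statistics are listed in the text.

```groovy
// Lines copied from the legacy trace extract above
def lines = '''
# BEGIN SIMULATION 1
d -t 584.925000 -s 1 -d 4 -i 259068939 -p 0 -x {1.0 4.0 -1 ------- null} -y CLEAR
+ -t 584.925000 -s 4 -d 1 -i -2069119004 -p 0 -x {4.0 1.0 -1 ------- null}
d -t 584.925000 -s 4 -d 1 -i -2069119004 -p 0 -x {4.0 1.0 -1 ------- null} -y COLLISION
d -t 584.925000 -s 4 -d 2 -i -2069119004 -p 0 -x {4.0 1.0 -1 ------- null} -y COLLISION
d -t 585.747000 -s 1 -d 2 -i 259068939 -p 0 -x {1.0 4.0 -1 ------- null} -y BAD_FRAME
# STATS: q=621, t=621, r=506, d=115, O=0.099, L=0.099, D=0.000, T=0.080
# END SIMULATION 1
'''.trim().readLines()

def drops = [:].withDefault { 0 }   // drop reason -> count
def stats = [:]                     // statistic key -> value

lines.each { line ->
  def m = (line =~ /^d .* -y (\w+)$/)
  if (m) drops[m[0][1]] += 1        // count the reason given after -y
  if (line.startsWith('# STATS:')) {
    line.substring('# STATS:'.length()).split(',').each { kv ->
      def (k, v) = kv.trim().split('=')
      stats[k] = v.toDouble()
    }
  }
}

println "Drops by reason: ${drops}"
// q, t, r, d: queued, transmitted, received, dropped packet counts;
// O: offered load, L: actual load, D: average latency, T: normalized throughput
println "Normalized throughput: ${stats.T}"
```

For a real trace, the lines could be read with `new File('logs/trace.nam').readLines()`, assuming the trace was opened at that path as shown earlier.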

| While the trace provides a simple file format and collects statistics for you, the events monitored by the legacy trace are currently limited to PHYSICAL service events. If you need to monitor or log events from other agents, you would want to use the JSON trace file. |
